
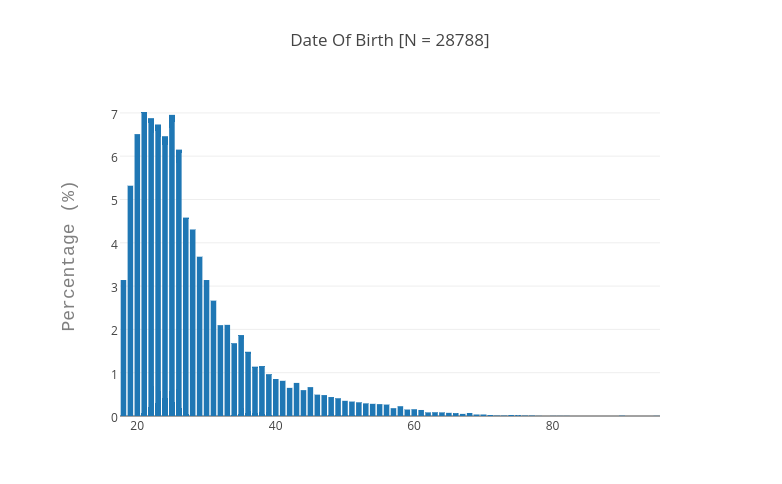

A while back I said I would share my thoughts on using Prolific Academic, in particular to help people figure out whether it could be a viable alternative to Amazon Mechanical Turk (MTurk). I have been using MTurk on and off for about 3 years now. Notwithstanding the occasional grumpy reviewer who hates MTurk data (see below for a comment I was on the receiving end of - ouch!), I still find it a pretty nifty method for getting good quality data.

Nevertheless, over the last few years a few stories have come out that have started to worry me (and others) about the long-term viability of MTurk as a platform for behavioural research. First, there are rumours that new workers from outside the US are routinely being turned away. Linked to that problem is the estimate that, despite MTurk boasting over 500,000 workers from 190+ countries, the vast majority of HITs are in reality completed by a much smaller group of workers. One paper estimated that all researchers using MTurk are sharing a workforce of around 7,000 workers (i.e. only a few times larger than the participant pool at a typical university). In addition, many of these workers will have taken part in the standard studies (e.g. Dictator Games, Prisoner's Dilemmas) several times before. This non-naïveté, coupled with the fact that not all research labs refrain from deceiving subjects (thereby effectively pissing in the shared pool), has led people to question to what extent data collected from this online workforce is really representative of anything other than itself.

Enter Prolific Academic. At first glance, this looks like a great proposition: a dedicated online platform established explicitly with research in mind. So, unlike MTurk, which was co-opted for this task, Prolific Academic has been designed with researchers' wants and needs put first. And I have to say, there is a lot to like.

The first major advantage is that Prolific collects and stores some pretty detailed demographic information from all participants upfront, so there is no longer any need to add the standard demographic questionnaire to your studies. This is handy for a few reasons. First, it means that you can post shorter and therefore cheaper experiments. Second, there is less chance of participants getting bored by filling out the same information again and again on different tasks. Finally, and most usefully, you can pre-screen participants for your study on the basis of age, gender, education level and pretty much any other demographic variable of interest (e.g. phone operating system!). Pretty cool.

The second thing I like about Prolific is the way it nudges (well, shoves) researchers to pay participants a fair wage. When you post a task you have to estimate how long it will take; based on this estimate, you then have to enter a baseline level of compensation that reflects the minimum hourly wage. I like this feature. One thing that could make it better, however, would be the option to include the minimum bonus that you will pay subjects (depending on how they perform in the task) as part of this calculation. This would ensure that subjects were getting a fair wage, while also allowing researchers to get the most out of their research budget. I also like the fact that Prolific suggests you send partial payments to workers who participate but for some reason fail to complete the task.

There are, however, a few things I have experienced that I hope are under development, or that would put me off using the site again:

Speed: sometimes the site is sooooo sloooooow. I'm not too sure why, but I don't think I'm the only one to have experienced this.

Bulk reject: this is basically a bit of a faff. There is no way to screen through your submissions and bulk reject all those who started but didn't complete (which, in my limited experience, seems to be quite a few). So you have to reject each incomplete or substandard submission individually, which (given the speed issue above) can be seriously time consuming. There is also a limit on the number of submissions you can reject in any one study. I totally get the rationale for this, and so far the team at Prolific have been super helpful at bulk rejecting incomplete submissions on my behalf, but this is something that has to be handed over to researchers to manage for themselves if the site is to be viable. One option would be a partial payment button that lets researchers reject incomplete or substandard work while still sending a partial payment, set either by the researcher or in proportion to the time the worker spent on the study.

Worker demographics: I've just tried to run a multi-country study on Prolific and, as with MTurk, some countries are very well represented (e.g. USA, UK, India, Brazil) while others just don't have the workforce to make it happen. Hopefully this will improve as the site gains traction.

So overall, I am cautiously optimistic about using Prolific as an alternative to MTurk. For the time being, I would say that the viable samples are going to be similar to those that can be collected on MTurk, and until researchers can manage their own submissions (specifically, rejections), I will probably stick with MTurk. But the minute this feature becomes available, I would definitely consider using Prolific much more.


Nichola Raihani is a Royal Society University Research Fellow based in the Department of Experimental Psychology at University College London.

Archives

April 2016
